Posts tagged with Software Engineering

Is Ralph Wiggum the Future of Coding?

Autonomous AI coding doesn't fail because models aren't smart enough. It fails because we give them too much context, vague goals, and no hard definition of success. The Ralph Wiggum approach flips that on its head. Short contexts, brutally clear tasks, hard completion signals, and relentless...

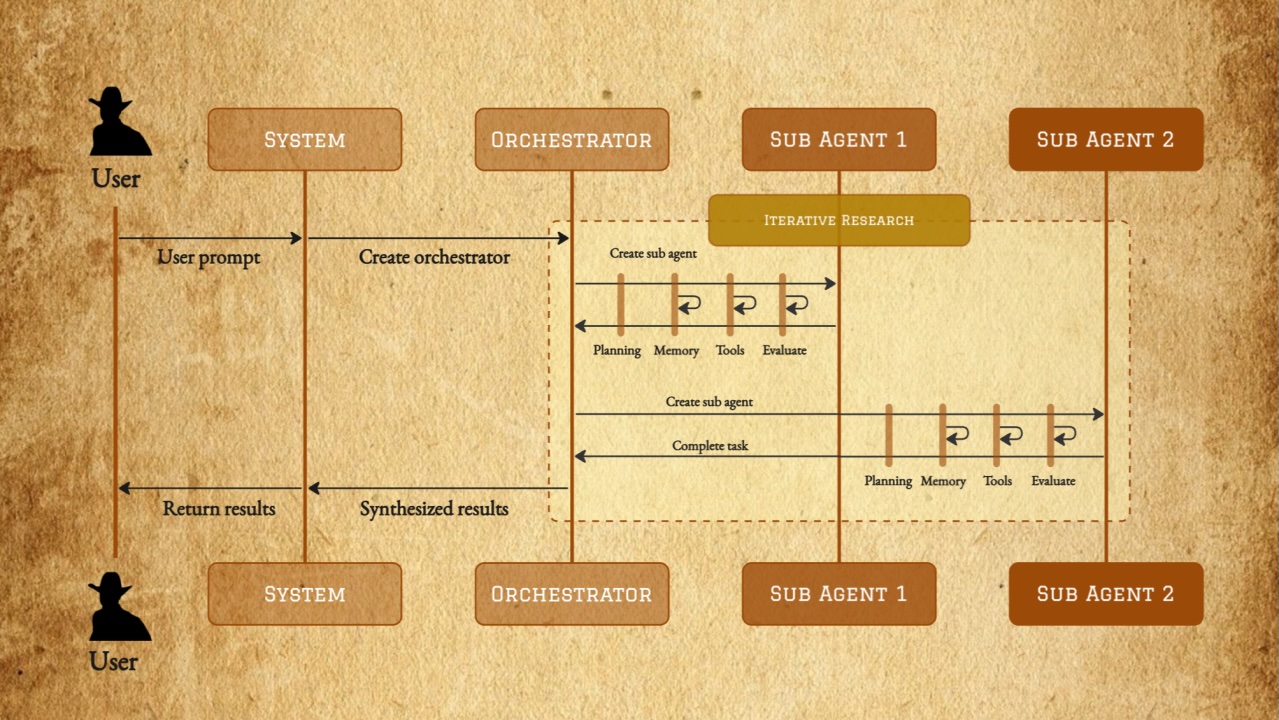

The Next AI Breakthrough Is Old-Fashioned Software Engineering

The next AI breakthrough won't be smarter models but reliable ones. As with self-driving cars, progress for AI agents means consistency over demos. The future of AI lies in disciplined software engineering: building agents that work safely and predictably every time, not just sometimes.

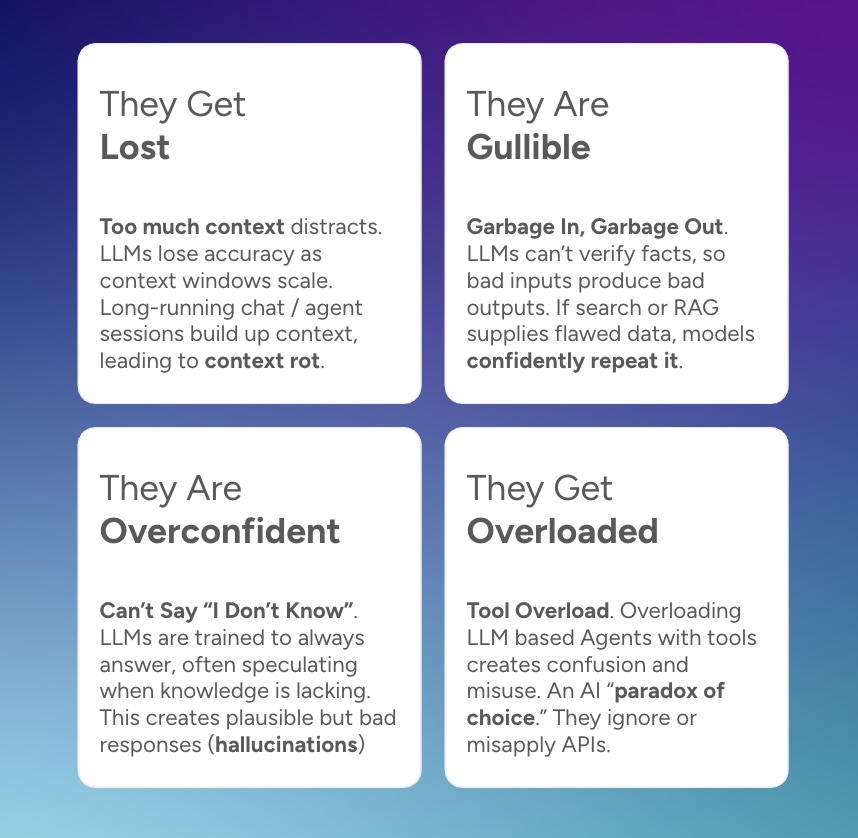

The 4 Ways LLMs Fail

Large language models (LLMs) and AI agents that use them often get lauded as magic. But anyone using them in production or serious applications quickly learns how often they fail. What we commonly call hallucinations, workslop, or vulnerabilities are not random bugs. They tend to cluster into...

No, RAG Isn't Dead, It Just Leveled Up As Context Engineering

There’s been a lot of recent buzz around whether Retrieval Augmented Generation (RAG) has reached its limits. Is RAG truly dead or just replaced by new approaches like search agents, MCP, or massive context windows? That's still retrieval-augmented generation under a new name.

LLM Routers - The AI Dispatchers You Didn't Know You Needed

Most AI models aren't generalists - they're specialists. With over 200,000 LLMs available, choosing just one model for your AI product won't cut it. Enter LLM routers - technology that routes each task to the model best suited to handle it. Discover how routers can cut costs by 85%, improve spe...

Building a Perplexity Clone with 100 lines of code

AI search apps like Perplexity.ai are really cool. They use an LLM to answer your questions, but pull in real-time search results to augment the answer (e.g. RAG) and list citations. I wanted to know how it works and decided to build my own version. Getting it to work is surprisingly simple and...

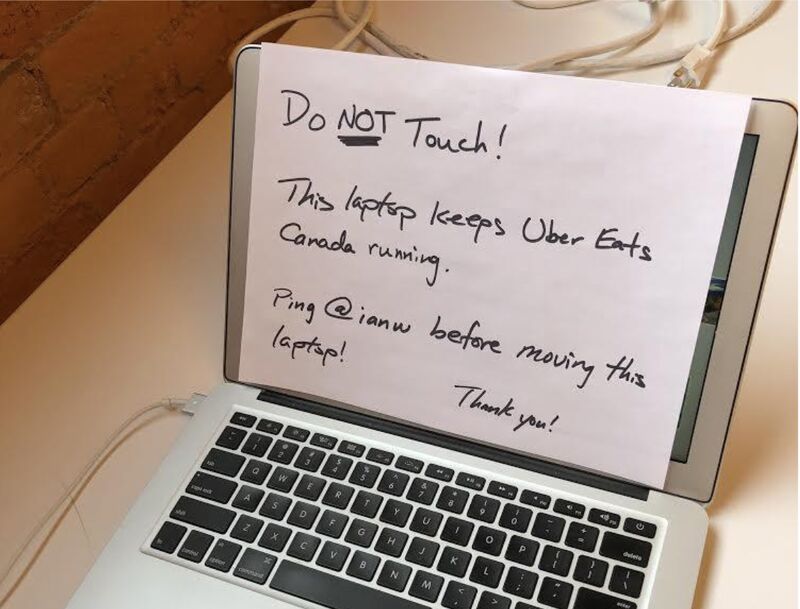

Do Things That Don't Scale

Launched in 2015 in Toronto, Uber Eats was the new kid on the block. With a scrappy tech stack and few tools, Operators hustled using spreadsheets and scripts to keep markets running. It was the ultimate "do things that don’t scale" moment that powered growth and shaped our future systems.

Code for your Fellow Humans

According to the classic book Clean Code, the ratio of time spent reading code to writing code is well over 10 to 1. And most of the time "writing" code is for maintenance reasons. So writing any "brand new" code represents a tiny fraction of a developer's day. It's critical to get that right.

The Power of Pre-Mortems in Software Development

The software post-mortem is well known. It’s a standard best practice that really marks the end of any software project. It also fits in well with the Agile manifesto, specifically the retrospective - always be reflecting, always be improving. Post-mortems are usually only conducted when thin...

Building Smarter Fantasy Football Projections with Machine Learning

Fantasy football has evolved from a casual hobby into a data-driven pursuit where success often hinges on having access to the best projections and analytics. As a software engineer and long-time fantasy football fan, I’ve been developing advanced machine learning techniques to create far mor...

So, You Want to Move to Microservices?

As I reflected on my time at LinkedIn, I put together a brief history of its scaling story. We had done the (now) classic migration from monolith to microservices. Just like, oh I dunno, Amazon, Google, eBay, Twitter, Netflix, and my current employer Uber (to name a few). And why not? Mic...

Code Reviews by Phase and Expectations

Code reviews are amazing for many reasons. And everyone on the team should contribute. Interestingly, the behavior of an engineer with respect to code reviews changes based on seniority or tenure within a team or code repository. I like to refer to these changes as “phases”. This document attemp...